Headlines

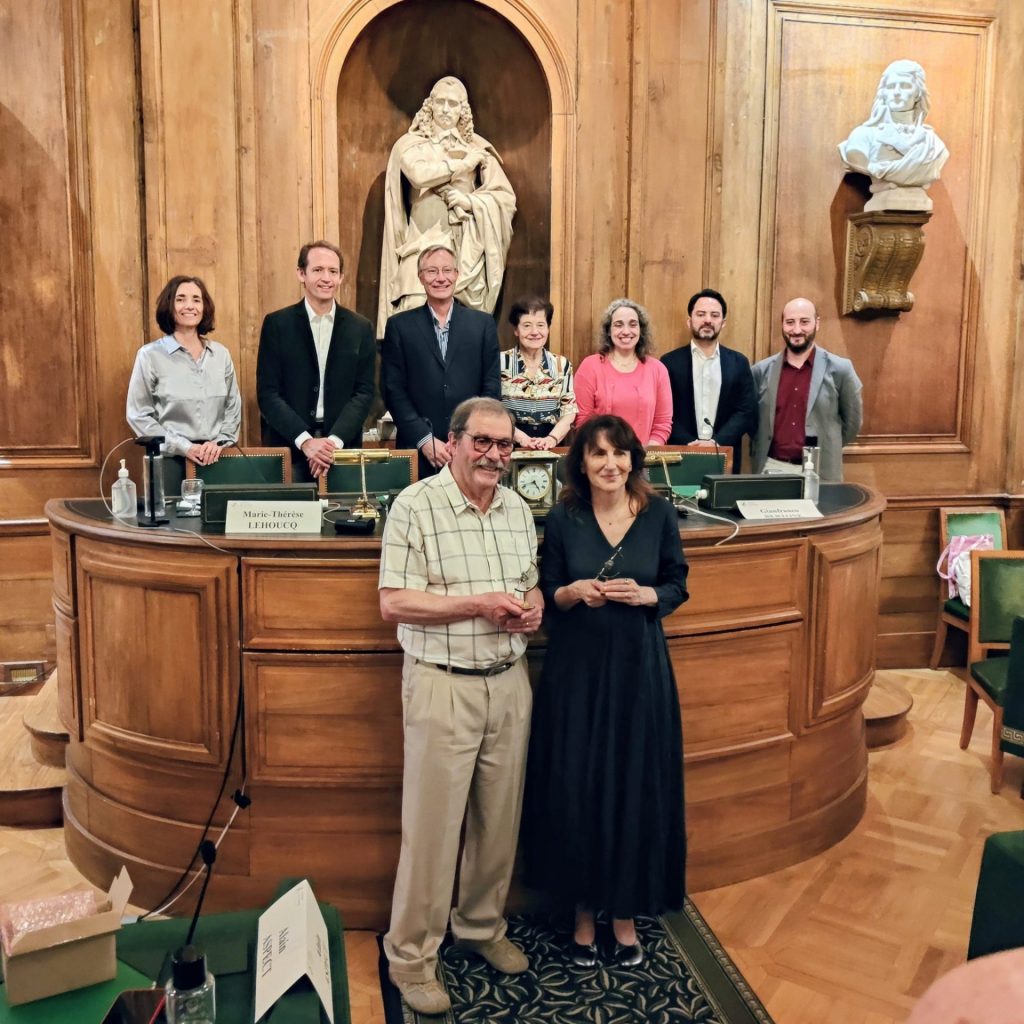

Cosmos Prize

Group picture of the prize winners Alain Aspect and Ersilia Vaudo with their trophy. Standing in the back, from left to right: Marie-Thérèse Lehoucq, Marco Cirelli, Pierre Vanhove, Françoise Combes (president of the Academy of Sciences), Ana Teixeira, Gianfranco Bertone and Pasquale Serpico. Photograph taken by M. Olivier Hagneré, teacher at the lycée Elie…

Agenda

8 June 2026

11h00 – 12h30

Long-time, large-distance asymptotics of correlation functions of the Lieb–Liniger model in thermal and non-thermal equilibrium

Salle Claude Itzykson, Bât. 774

9 June 2026

14h00 – 16h00

Chiral Dynamics: Do Symmetries Have To Break?

Salle Claude Itzykson, Bât. 774

12 June 2026

10h00 – 12h00

Statistical Physics of Ecosystems 4/5

Salle Claude Itzykson, Bât. 774

16 June 2026

11h00 – 12h00

Outreach seminar: The black hole information paradox (title and topic to be confirmed)

Salle Claude Itzykson, Bât. 774

- Sylvain Ribault

- Angèle Lochet

No events